@VideoDad Glad to hear it - I was thinking about you when I posted that haha.

Yes, it will all be part of the same update. You could always make a copy of your directory and then spin up a duplicate on another port to run the upgrade on.

I have both my database dump as well as some from others that I haven’t gotten to yet (thanks all for sending). There are now tests for almost every feature, and they run every time an update is made to try to ensure that everything functions as it should.

I’d be surprised if any of the existing features stopped working properly. The main thing that is slightly iffy is the database migration. My tests will ensure that it succeeds on all the dumps I have, but since people have run some commands altering their databases, I can’t be completely positive it will work without issue. That being said, I did add a check that runs first to verify that your current schema is what the code expects and can take the migration. If for some reason it is different and can’t be upgraded, it should just tell you it can’t do it. It won’t try anyway and then break your database.
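To give a rough idea of what a "check the schema first, refuse if it differs" step can look like, here is a minimal sketch. It is not the actual migration code: the table and column names, `EXPECTED_SCHEMA`, `schema_mismatches`, and `migrate_if_safe` are all made up for illustration, and it uses SQLite only so the example runs on its own.

```python
import sqlite3

# Hypothetical expected schema: table name -> set of column names.
EXPECTED_SCHEMA = {
    "plate_reads": {"id", "plate_number", "timestamp"},
}

def schema_mismatches(conn, expected):
    """Return a list of readable differences; an empty list means safe to migrate."""
    problems = []
    for table, columns in expected.items():
        # PRAGMA table_info returns one row per column; index 1 is the column name.
        rows = conn.execute(f"PRAGMA table_info({table})").fetchall()
        actual = {row[1] for row in rows}
        if not actual:
            problems.append(f"missing table: {table}")
            continue
        missing = columns - actual
        extra = actual - columns
        if missing:
            problems.append(f"{table}: missing columns {sorted(missing)}")
        if extra:
            problems.append(f"{table}: unexpected columns {sorted(extra)}")
    return problems

def migrate_if_safe(conn, expected, migration_sql):
    """Run the migration only when the schema matches; otherwise change nothing."""
    problems = schema_mismatches(conn, expected)
    if problems:
        return problems  # refuse and report instead of attempting the upgrade
    with conn:  # commits on success, rolls back if the statement raises
        conn.execute(migration_sql)
    return []
```

The point of returning the list of problems is that the caller can show you exactly what differs instead of failing halfway through.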

Another note about this automation functionality - I still don’t love the “redirect”/rewrite to correct plate functionality that several requested, but since it’s an easy thing to add here now, I will add an edit plate number action to the automations. I like this because it doesn’t require me to explicitly build in and support that workaround, but still offers a solution for those who wanted it. If you now want to create an “automation” on your system that happens to try to correct misreads automatically, nothing will stop you from doing that.
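For anyone wondering what such an automation might boil down to, here is a small sketch of a "rewrite known misreads" rule. This is only an illustration of the idea, not how the automations are actually built: `EditPlateRule` and `apply_rules` are hypothetical names, and matching on an exact, case-insensitive plate string is an assumption.

```python
from dataclasses import dataclass

@dataclass
class EditPlateRule:
    """Hypothetical automation rule: when a read equals `misread`, rewrite it."""
    misread: str
    corrected: str

def apply_rules(plate, rules):
    """Return the corrected plate if a rule matches, else the normalized plate."""
    normalized = plate.strip().upper()
    for rule in rules:
        if normalized == rule.misread.upper():
            return rule.corrected
    return normalized
```

For example, with a rule `EditPlateRule("ABC123", "ABC128")`, a read of `abc123` would be stored as `ABC128`, and any plate without a matching rule passes through untouched apart from trimming and uppercasing.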

I’m still not finished. Didn’t make as much progress as I wanted yesterday. Claude Opus has been really hit or miss for me lately. One day it works incredibly well - the next it’s like it’s a completely different model. All with the same prompts.

Ended up having to do a lot of the automations stuff manually because of this, so I didn’t get to much else.

The outstanding items as of now that I still want to add are the following:

- Stolen car check

- Decide how to do the embeddings for semantic search

- Backfilling detections from blue iris for new installs

- Back up/export data

- Add that crazy data table I shared before as an advanced mode to allow for a more “power user” level viewing and search for the live feed.

- A system monitoring page. My BI randomly dies every once in a while and it often takes me a bit to notice. I want to add simple health checks for BI and CPAI so that I can get a notification if they ever go down. Additionally this page will show load stats for the computer as well as graph the inference time for the AI to show if performance starts degrading or other trends.
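As a rough sketch of the simple health checks mentioned in the last item, something like the following would be enough to detect that a service has stopped accepting connections. The function names, hosts, and ports are placeholders I picked for the example, and a plain TCP connect is an assumption; the real check may well hit an HTTP endpoint instead.

```python
import socket

def tcp_probe(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:  # covers refused connections, timeouts, and unreachable hosts
        return False

def find_down_services(services, probe=tcp_probe):
    """Return the names of services whose probe failed."""
    return [name for name, (host, port) in services.items() if not probe(host, port)]

# Placeholder addresses; adjust to wherever BI and CPAI actually listen.
SERVICES = {
    "Blue Iris": ("127.0.0.1", 81),
    "CPAI": ("127.0.0.1", 32168),
}
```

Calling `find_down_services(SERVICES)` on a schedule and sending a notification whenever the list is non-empty would cover the "let me know if it dies" case. The `probe` argument is injectable so the logic can be tested without real services running.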

Should be a nice big update for the first time in a while. I’ve built up a pretty horrendous track record for time estimates here, so please just bear with me while I find time for this.

Any other suggestions are welcome.

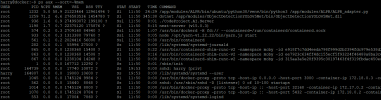

Claude also found that the logging routine was really inefficient, and suggested a fix that it has implemented: