AI Good and Bad

- Thread starter bigredfish

- Start date

Anthropic Claude AI convinced to hack Mexico data

cybersecuritynews.com

Hacker Jailbreaks Claude AI to Write Exploit Code and Steal Government Data

A hacker exploited Anthropic's Claude AI chatbot over a month-long campaign starting in December 2025, using it to identify vulnerabilities, generate exploit code, and exfiltrate sensitive data from Mexican government agencies.

mat200

IPCT Contributor

- Jan 17, 2017

- 18,971

- 31,725

Dean W. Ball

@deanwball

A primer on the Anthropic/DoD situation

DoD and Anthropic have a contract to use Claude in classified settings. Right now Anthropic is the only AI company whose models work in classified contexts. The existing contract, signed by both parties and in effect, prohibits two uses of Anthropic’s models by the military:

1. Surveillance of Americans in the United States (as opposed to Americans abroad).
2. The use of Claude in autonomous lethal weapons, which are weapons that can autonomously identify, track, and kill a human with no human oversight or approval. Autonomous killing of humans by machines.

On (2), Anthropic CEO Dario Amodei’s public position is essentially that autonomous lethal weapons controlled by frontier AI will be essential faster than most people realize, but that the models aren’t ready for this *today.*

For Anthropic, these things seem to be a matter of principle. It’s worth noting that when I speak with researchers at other frontier labs, their principles on this are similar, if not often stricter.

For DoD, however, there is another matter of principle: the military’s use of technology should only ever be constrained by the Constitution or the laws of the United States. One could quibble (the government enters into contracts, like anyone else), but the principle makes sense. A private company regulating the military’s use of AI also doesn’t sound quite right!

So, the military has three options:

1. They could cancel Anthropic’s contract and find some other frontier lab (ideally several) to work with.
2. They could designate Anthropic a supply chain risk, which would ban all other DoD suppliers (i.e., a large fraction of the publicly traded firms in America) from using Anthropic in their fulfillment of DoD contracts. This is a power used only for foreign adversary companies as far as I know. Activating this power would cost Anthropic a lot of business, potentially quite a lot, and give investors huge skepticism about whether the company is worth funding for the next round of scaling. Capital was a major constraint anyway, but this makes it much harder. This option could be existential for Anthropic.
3. They could activate Title I of the Defense Production Act, an authority intended for command-and-control of the economy during wars and emergencies. This is really legally murky, and without going into detail, I feel reasonably confident this would backfire for the administration, resulting in courts limiting the use of the DPA.

Option 1 is obviously the best. This isn’t even close, and I say this as someone who shares DoD’s principled concerns about the control by private firms over the military’s use of technology.

Even the threats do damage to the US business environment, and rightfully so: these are the strictest regulations of AI being considered by any government on Earth, and it all comes from an administration that bills itself as (and legitimately has been) deeply anti-AI-regulation. Such is life. One man’s regulation is another man’s national security necessity.

Spying on citizens is kinda against the Constitution... so there's that

I can't imagine that's legal, but those kinds of little details no longer seem to matter.

If the current government wants to completely trash your company because you won't do business with them, oh well.

mat200

IPCT Contributor

- Jan 17, 2017

- 18,971

- 31,725

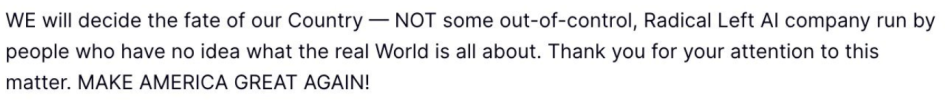

But of course he did…

wow .. calling Anthropic a Radical Left AI company .. I do not know of ANY radical left company that bids for a US Military contract.

Man, talk about unhinged rants

Guessing the mid-terms are gonna hit hard on Mr Trumpet

Given the contract was for only $200M .. my guess is that other AI firms may choose not to do contracts with these slanderers

funny comment I saw ..

tigerwillow1

Known around here

This is one time I decisively stand against the administration.

Arjun

IPCT Contributor

mat200

IPCT Contributor

- Jan 17, 2017

- 18,971

- 31,725

Reckless and dangerous.

and yet .. the Pentagon continues to use Anthropic to wage war ..

This is insane