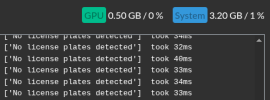

I'm doing LPR from 4 cameras in an office park, and feeding that into the ALPR Database.

I also have 20+ other cameras doing regular monitoring. My CPU hovers around 50-60% when traffic is light, but during peak traffic it can peg at 100%, especially when the Docker container for the ALPR Database starts misbehaving.

I was thinking that offloading some of the AI burden might help. I was running CPAI on the same box as BI, and recently switched to BI 6's built-in AI.

Since the built-in AI runs on ONNX Runtime, which uses DirectML, and DirectML only requires DirectX 12 support, it seems like there should be lots of GPU options.
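One way to sanity-check a candidate card before committing to it is to ask ONNX Runtime itself whether it can see DirectML on that box. The sketch below assumes the `onnxruntime-directml` pip package is installed (the plain CPU `onnxruntime` package will not list `DmlExecutionProvider`). It only shows what ONNX Runtime can see from Python; it is not how BI 6 picks its device internally, and the preference list is a hypothetical choice.

```python
"""Check which ONNX Runtime execution providers this machine exposes.

Assumes `onnxruntime-directml` is installed; without it, DirectML will not show up.
"""

# Hypothetical preference order: DirectML GPU first, CPU as the fallback.
PREFERRED = ["DmlExecutionProvider", "CPUExecutionProvider"]


def pick_providers(available):
    """Return the preferred providers that are actually available, in preference order."""
    return [p for p in PREFERRED if p in available]


def main():
    try:
        import onnxruntime as ort
    except ImportError:
        print("onnxruntime is not installed")
        return
    available = ort.get_available_providers()
    chosen = pick_providers(available)
    print("Available:", available)
    print("Would use:", chosen)
    if "DmlExecutionProvider" not in chosen:
        print("DirectML not visible; inference would fall back to the CPU")


if __name__ == "__main__":
    main()
```

If the new card shows up here, DirectML can at least enumerate it; whether it is fast enough for LPR still has to be measured.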

How much VRAM do I need for LPR? This card is only $50 at Amazon, but it has only 2 GB. For $57 I can get 4 GB. Edit: that one only supports DirectX 11.

What's a decent minimum spec for this kind of application? Does performance fall off a cliff with insufficient VRAM, or does it degrade gracefully?

Going to 8 GB puts me into far more expensive territory for a science experiment.
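For a first cut at the 2 GB vs 4 GB question, the model weights are easy to estimate: parameter count times bytes per parameter (4 for FP32, 2 for FP16). The sketch below adds that to assumed activation and runtime overhead figures and checks the total against a card's VRAM. Every parameter count, overhead number, and the 0.8 headroom factor are hypothetical placeholders, not measurements of BI's models, and this arithmetic says nothing about whether performance degrades gracefully once VRAM runs out.

```python
"""Back-of-the-envelope VRAM estimate for running several ONNX models.

All parameter counts and overhead figures are hypothetical placeholders;
substitute numbers for the models you actually run.
"""

MIB = 1024 ** 2


def estimate_vram_mb(num_params, bytes_per_param=4, activation_mb=0.0, runtime_overhead_mb=0.0):
    """Weights (params x bytes each) plus assumed activation and runtime overhead, in MiB.

    bytes_per_param: 4 for FP32 weights, 2 for FP16.
    """
    weights_mb = num_params * bytes_per_param / MIB
    return weights_mb + activation_mb + runtime_overhead_mb


def fits_in_vram(model_mbs, vram_mb, headroom=0.8):
    """True if the models together use at most `headroom` of the card's VRAM.

    The 0.8 headroom is an arbitrary safety margin, not a measured figure.
    """
    return sum(model_mbs) <= vram_mb * headroom


if __name__ == "__main__":
    # Hypothetical pipeline: general object detector, plate detector, plate OCR.
    models = [
        estimate_vram_mb(7_000_000, activation_mb=200, runtime_overhead_mb=150),
        estimate_vram_mb(3_000_000, activation_mb=100, runtime_overhead_mb=150),
        estimate_vram_mb(1_000_000, activation_mb=50, runtime_overhead_mb=150),
    ]
    total = sum(models)
    for vram in (2048, 4096):
        print(f"{vram} MiB card: need ~{total:.0f} MiB, fits={fits_in_vram(models, vram)}")
```

The weights are usually the small part; activations and per-session overhead tend to dominate, which is why those are the numbers worth measuring on real hardware.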

Thanks!
